Fast16: The Cyberweapon That Predates Stuxnet by Five Years

Want to learn ethical hacking? I built a complete course. Have a look!

Learn penetration testing, web exploitation, network security, and the hacker mindset:

→ Master ethical hacking hands-on

Hacking is not a hobby but a way of life!

For 21 years, a cyberweapon called fast16 sat essentially undetected. This one did not destroy machines or blow things up. It corrupted the math. Scientists running nuclear and engineering simulations would have gotten output that looked completely normal: every number added up, every result made sense, and all of it was deliberately wrong. It surfaced last week, and it predates Stuxnet by five years.

SentinelOne researchers Vitaly Kamluk and Juan Andrés Guerrero-Saade presented the full analysis of fast16 at Black Hat Asia last week. Fast16’s core binary has a compilation timestamp of August 30, 2005. Stuxnet’s C&C infrastructure was set up in November of that same year. So fast16 was compiled before even that infrastructure existed, and about five years before Stuxnet was discovered in 2010.

Most people in security know Stuxnet as the worm that destroyed centrifuges at Iran’s Natanz nuclear facility around 2010 by pushing them past their mechanical limits while lying to the monitoring software about what was happening. It was the first known cyberweapon designed to cause physical destruction, and for years it was considered the starting point of this whole era. Fast16 was there first, and for years it may well have been the only tool of its kind.

Kamluk started with a hunch. He had noticed that the most sophisticated state-sponsored malware families he knew about all shared one technical habit: each one embedded an interpreter for Lua, a small scripting language. Lua works like a remote control for malware: it lets operators change what the implant does while it is already running on a target machine, without sending a completely new file. He wanted to know whether something older had done the same thing first, so he went looking through old malware collections.

What he found was a file on VirusTotal called svcmgmt.exe, uploaded in October 2016 and flagged by almost nobody. It looked like a boring Windows service wrapper from the XP era. But inside it was an embedded Lua 5.0 virtual machine, encrypted bytecode, and a path pointing to a kernel driver called fast16.sys. That makes fast16 the earliest known Windows malware to embed a Lua engine, predating the next known example by three full years.

One more detail supports the timeline. Fast16 only runs on single-core processors, which fits a tool built when most machines still had a single core and multi-core chips were just beginning to arrive on the market.

The framework runs in three layers. The outer layer is svcmgmt.exe, a carrier that behaves differently depending on how it is launched. Pass it -p and it spreads across the network. Pass it -i and it installs itself as a Windows service and runs the embedded payload. Pass it -r and it runs the payload without installing. Inside the carrier are three components stored in encrypted form: the Lua bytecode that handles the operational logic, a DLL that hooks into Windows’ dial-up and VPN connection system, and fast16.sys itself.

That DLL is worth a closer look. Every time a machine connects to a remote network, it writes the connection details to a named pipe that operators can read. So while fast16.sys was corrupting calculations, the DLL was quietly recording which machines connected to which networks, giving operators a live picture of the facility’s internal structure.

Part of what makes that outer layer interesting is how it spreads. The mechanism works like a delivery truck with multiple compartments. Each compartment, called a wormlet, can carry a different payload for a different purpose. The carrier copies itself across network shares with weak authentication and starts up as a service on every machine it reaches. SentinelOne calls this cluster munition architecture. In the recovered sample, only one of those compartments is filled. The others are empty, which raises an obvious question about whether other variants exist with different payloads that nobody has found yet.

Before any of this runs, the code checks the registry for security software. If it finds Kaspersky, Symantec, McAfee, F-Secure, Zone Labs, or about a dozen other products that were common in the mid-2000s, it stops immediately. That list was not guesswork. It reflects exactly what the operators expected to find on the machines they were after.

The second layer is the worm, which spreads using standard Windows service control and file-sharing APIs, nothing custom. It relies on weak or default admin passwords on network shares to move from machine to machine, which was a realistic assumption for a lot of internal networks in 2005.

The third layer is fast16.sys, and this is where the sabotage actually happens. A kernel driver runs deep inside the operating system, below where antivirus software of that era normally looked. Fast16.sys loads at boot and attaches itself above every file system on the machine: NTFS, FAT, and the network file system. The first thing it does when it loads is disable the Windows Prefetcher, a feature that normally preloads frequently used files to speed things up. With that off, every file read has to go through the full storage stack, and therefore through the driver. Then it simply waits. Nothing happens until someone logs in and the desktop starts. Only then does it begin watching every executable that gets opened.

The driver does not go after every file it sees. It is looking for software built with a specific tool: the Intel C++ compiler leaves a small identifying string in every executable it produces, right after the last section header. The developers knew exactly what compiler their targets used, and built the selection logic around that fingerprint.
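Defenders who want to know which of their own executables would have matched this kind of selection logic can look at the same spot in the file. The sketch below is a minimal illustration based on the standard PE file layout: it returns the bytes that follow the last section header. The Intel compiler string itself is not reproduced in this article, so the function names and the `marker` argument are hypothetical placeholders you would fill in from SentinelOne's paper.

```python
import struct

def bytes_after_section_table(pe: bytes, length: int = 64) -> bytes:
    """Return up to `length` bytes that follow the last PE section header."""
    if pe[:2] != b"MZ":
        raise ValueError("missing MZ (DOS) header")
    # The DOS header stores the offset of the PE signature (e_lfanew) at 0x3C.
    (e_lfanew,) = struct.unpack_from("<I", pe, 0x3C)
    if pe[e_lfanew:e_lfanew + 4] != b"PE\0\0":
        raise ValueError("missing PE signature")
    coff = e_lfanew + 4  # the 20-byte COFF file header follows the signature
    (num_sections,) = struct.unpack_from("<H", pe, coff + 2)
    (optional_header_size,) = struct.unpack_from("<H", pe, coff + 16)
    section_table = coff + 20 + optional_header_size
    end_of_table = section_table + 40 * num_sections  # each section header is 40 bytes
    return pe[end_of_table:end_of_table + length]

def has_marker(pe: bytes, marker: bytes, window: int = 64) -> bool:
    """Hypothetical check: does `marker` appear right after the section table?"""
    return marker in bytes_after_section_table(pe, window)
```

In practice you would read a file in binary mode and pass its contents in. The headers sit near the start of the file, so reading the first few kilobytes is usually enough.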

For every file that matches, the driver intercepts the floating-point calculation routines in memory as the file is being read from disk. Floating-point calculations are the math behind precision simulations, the kind that tell you whether a bridge design will hold under load, or whether an explosive trigger will detonate at the right moment. The driver patches those routines using 101 pattern-matching rules, injects a block of FPU instructions that quietly shifts values in internal calculation arrays, and lets the file load as if nothing happened. The original code on disk is untouched. The software runs normally. The results are wrong.
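To see why this kind of tampering is so hard to catch by eye, here is a toy illustration in Python. It is not fast16's patch, which worked on FPU instructions inside the target binaries. It simply nudges one intermediate value in a textbook cantilever beam formula, δ = PL³/(3EI), by a hypothetical 3% and compares the results.

```python
def cantilever_tip_deflection(load_n: float, length_m: float,
                              youngs_modulus_pa: float, second_moment_m4: float) -> float:
    """Tip deflection (m) of a cantilever with a point load at its free end: P*L^3 / (3*E*I)."""
    return load_n * length_m ** 3 / (3 * youngs_modulus_pa * second_moment_m4)

# Example inputs: 10 kN at the end of a 2 m steel beam (E = 200 GPa, I = 8e-6 m^4).
honest = cantilever_tip_deflection(10_000, 2.0, 200e9, 8e-6)

# Hypothetical tampering: the stiffness term is silently scaled up by 3%.
tampered = cantilever_tip_deflection(10_000, 2.0, 200e9 * 1.03, 8e-6)

print(f"honest:   {honest * 1000:.2f} mm")                # 16.67 mm
print(f"tampered: {tampered * 1000:.2f} mm")              # 16.18 mm
print(f"error:    {(honest - tampered) / honest:.1%}")    # 2.9%
```

Both numbers are physically plausible for a beam like this, which is exactly the problem: nothing in the output itself signals that one of them is wrong.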

Running those 101 rules against software from that era pointed to three specific targets.

The first is LS-DYNA 970, a simulation suite used for modeling explosions, structural failures, and high-speed impacts. The Institute for Science and International Security published a review in September 2024 of 157 academic papers showing that Iranian researchers used LS-DYNA in work connected to nuclear weapons development, specifically modeling the explosive triggers that initiate warhead detonation. If fast16 was running on those machines, the scientists had no way of knowing their results were wrong, and every design decision based on those numbers would have been built on corrupted output.

The second target is PKPM, and this is the part most coverage misses entirely. PKPM is China’s dominant structural engineering software, developed by Tsinghua University and the China Academy of Building Research and used across Chinese construction projects for over three decades. What makes it more than a standard civil engineering tool is that PKPM is also used for seismic structural analysis of nuclear reactor facilities. A 2024 paper in Advances in Civil Engineering documents the use of PKPM to model the structural behavior of China’s TMSR-LF1 thorium molten salt reactor under earthquake conditions. SentinelOne cannot confirm who the PKPM target was or where fast16 ran. Whether this was aimed at a second target country is left as an open question.

The third is MOHID, an open-source water modeling platform developed at the Instituto Superior Técnico in Lisbon. It is used for modeling coastal water systems, sediment transport, dam behavior, and the environmental impact of large construction projects near water. SentinelOne says openly that they cannot identify what the intended sabotage effect on this software would have been, and they are asking the research community for help. The answer may still be sitting in a sample nobody has found yet.

The NSA connection comes from a list in the ShadowBrokers leak. In April 2017, the ShadowBrokers published a large collection of materials widely understood to have come from the NSA’s Equation Group. Inside was a file called drv_list.txt, basically a do-not-touch list for operators. When a team landed on a target machine and found a driver from that list, it told them whether that driver belonged to a friendly operation and whether they should leave it alone. It was a system for making sure different teams did not accidentally interfere with each other’s work.

Most entries on that list got a note to be cautious or pull back. Fast16 got something different:

| |

That is one operator telling another: if you find this driver, do not touch it, it is ours. Researchers at CrySyS Lab noticed this entry when they analyzed the ShadowBrokers dump in 2018 but had no sample to connect it to. Eight years later, there is one. As with all intelligence leaks, though, the full picture is not available from the outside.

One more thing in the code stands out. It contains version control markers from Unix development tools of the 1970s and 1980s, before Windows existed. They look like this:

| |

That kind of notation, the keyword strings of the SCCS and RCS version control systems, is the equivalent of finding a rotary phone in a modern office. It is very unusual in 2005 Windows kernel code unless the developers’ programming background goes back decades, to government and military computing environments from a completely different era. These are not weekend hackers or freelancers. Everything points to a long-running institutional program built by people who spent their careers in very specific places.
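These strings survive compilation as plain bytes, which is what made them visible to the researchers. A minimal sketch of pulling them out of a binary, in the spirit of the classic Unix `what` (SCCS) and `ident` (RCS) tools, could look like this. The regular expressions are simplified assumptions, not the exact rules either tool uses.

```python
import re

# SCCS "what" strings start with @(#) and run until a quote, '>', newline, backslash or NUL.
SCCS_WHAT = re.compile(rb'@\(#\)[^">\n\\\x00]*')

# Common RCS keywords expand to the form $Keyword: value $, e.g. $Id: ... $.
RCS_KEYWORD = re.compile(
    rb'\$(?:Author|Date|Header|Id|Locker|Log|Name|RCSfile|Revision|Source|State): [^$\n\x00]*\$'
)

def version_control_markers(blob: bytes) -> list[str]:
    """Return SCCS what-strings and expanded RCS keywords found in a binary blob."""
    found = [m.group(0) for m in SCCS_WHAT.finditer(blob)]
    found += [m.group(0) for m in RCS_KEYWORD.finditer(blob)]
    # latin-1 maps every byte to a character, so decoding never fails.
    return [raw.decode("latin-1") for raw in found]
```

To use it, read a binary in `"rb"` mode and pass the bytes in; each result is a candidate marker worth a closer look, not proof of anything on its own.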

What makes all of this worse is the detection record. Svcmgmt.exe was uploaded to VirusTotal in October 2016 and sat there for nearly a decade, completely in the open. One antivirus engine out of roughly seventy flagged it, weakly, as generally malicious. A self-propagating carrier that deploys a boot-level kernel driver with an in-memory floating-point patching engine had been sitting in a public database for nine years, almost invisible to every scanner that looked at it.

Kamluk used Claude to help analyze fast16 and write up the findings. At one point the AI repeatedly failed to finish a report he had asked it to write. When he asked why, Claude produced paragraphs of self-criticism, urging itself to just get it done. It eventually did, and concluded that whoever built fast16 had intimate knowledge of the target software and that industrial sabotage was the most likely intention. A 21-year-old piece of malware stumped a modern AI long enough to make it reflect on its own limitations.

SentinelOne has already notified the LS-DYNA and PKPM vendors directly. If you work with older simulation software, particularly versions of LS-DYNA or PKPM from the mid-2000s, the recommended action is to verify critical calculation outputs against a completely independent system that sits outside any potentially affected network. If fast16 spread across an entire facility and patched every workstation, a comparison calculation run inside that same network would produce the same wrong output. A machine completely outside that environment would not.
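A minimal sketch of that comparison step, assuming you have exported the same output quantities from both runs as lists of numbers: it flags every value where the suspect run and the independent reference disagree beyond a tolerance. The tolerance matters, because legitimate runs on different hardware or compiler settings can differ in the last few digits; the `1e-6` default here is an arbitrary placeholder, not a recommended value.

```python
import math

def disagreements(suspect: list[float], reference: list[float],
                  rel_tol: float = 1e-6, abs_tol: float = 0.0) -> list[int]:
    """Return indices where a suspect result differs from the independent reference run."""
    if len(suspect) != len(reference):
        raise ValueError("the two runs produced different numbers of results")
    return [
        i for i, (s, r) in enumerate(zip(suspect, reference))
        if not math.isclose(s, r, rel_tol=rel_tol, abs_tol=abs_tol)
    ]

# Hypothetical example: the third value drifted by about 1%.
inside_network = [12.5, 0.0031, 418.2]
isolated_machine = [12.5, 0.0031, 414.1]
print(disagreements(inside_network, isolated_machine))  # [2]
```

Setting `abs_tol` helps for quantities that should be close to zero, where a purely relative comparison becomes overly strict.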

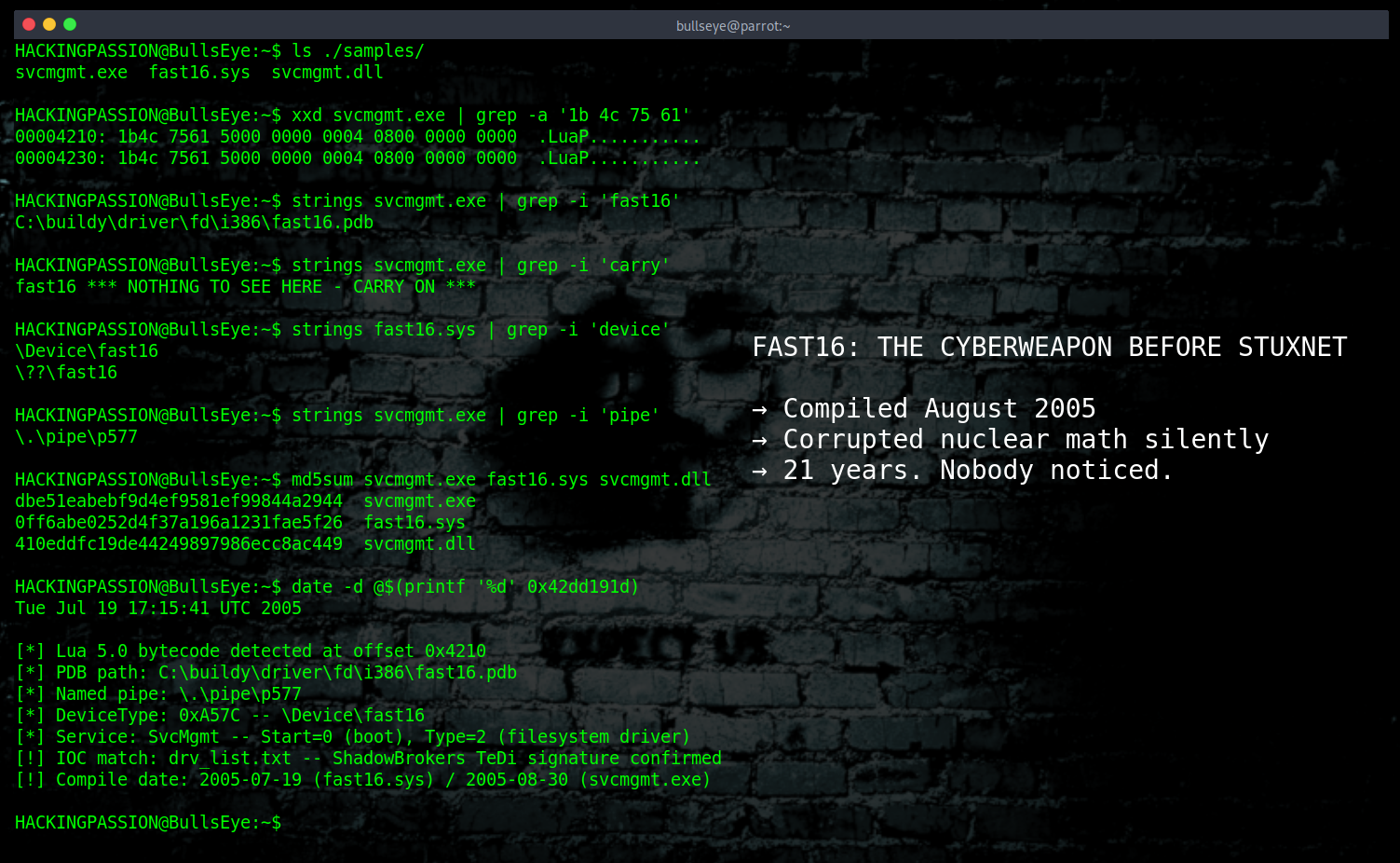

The indicators to look for:

- → Driver: fast16.sys (MD5: 0ff6abe0252d4f37a196a1231fae5f26)
- → Carrier: svcmgmt.exe (MD5: dbe51eabebf9d4ef9581ef99844a2944)
- → Notification DLL: svcmgmt.dll (MD5: 410eddfc19de44249897986ecc8ac449)
- → Named pipe used for reporting: \\.\pipe\p577
- → Device objects created by the driver: \Device\fast16 and \??\fast16
- → Custom DeviceType value in the driver: 0xA57C
- → Service name installed by the carrier: SvcMgmt
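A minimal sketch for sweeping a directory tree for the three file hashes in the indicator list, using only Python's standard library. Hash matches only catch these exact samples, so a clean result does not rule out a variant; SentinelOne's YARA rules are the better tool for that.

```python
import hashlib
import os

# MD5 indicators published by SentinelOne for the recovered fast16 sample.
IOC_MD5 = {
    "0ff6abe0252d4f37a196a1231fae5f26": "fast16.sys (driver)",
    "dbe51eabebf9d4ef9581ef99844a2944": "svcmgmt.exe (carrier)",
    "410eddfc19de44249897986ecc8ac449": "svcmgmt.dll (notification DLL)",
}

def md5_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks (1 MiB by default) so large files never have to fit in memory."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def scan(root: str) -> list[tuple[str, str]]:
    """Walk `root` and return (path, label) for every file whose MD5 is a known indicator."""
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):  # unreadable directories are skipped
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                label = IOC_MD5.get(md5_of(path))
            except OSError:
                continue  # locked or permission-protected file
            if label:
                hits.append((path, label))
    return hits
```

On a Windows host you might call `scan("C:\\")` and review any hits. The pipe, device, and service indicators have to be checked on the live system instead.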

SentinelOne published full YARA rules for hunting both the carrier and the driver in their research paper.
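For readers new to YARA: the hex strings in such rules are byte patterns where `??` stands for any single byte. A minimal Python sketch of that one idea follows. It supports only whole-byte `??` wildcards (no nibble wildcards, jumps, or alternations), the example pattern is made up, and for real hunting you would run SentinelOne's rules with YARA itself.

```python
import re

def compile_hex_pattern(pattern: str) -> "re.Pattern[bytes]":
    """Turn a YARA-style hex string such as '4D 5A ?? 00' into a bytes regex."""
    parts = []
    for token in pattern.split():
        if token == "??":
            parts.append(b".")  # wildcard: any single byte
        else:
            parts.append(re.escape(bytes.fromhex(token)))  # literal byte, escaped for regex
    # DOTALL lets '.' also match 0x0A, which is just another byte in a binary file.
    return re.compile(b"".join(parts), re.DOTALL)

# Hypothetical pattern, not taken from SentinelOne's rules.
rule = compile_hex_pattern("4D 5A ?? 00")
print(rule.search(b"\x00\x00MZ\x90\x00") is not None)  # True
print(rule.search(b"MZ\x90\x01") is not None)          # False
```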

If something this sophisticated spent 21 years undetected, sitting on VirusTotal for nearly a decade while almost no antivirus engine noticed it, what else is sitting in similar collections right now, waiting for someone to ask a different question? Probably more than anyone wants to know.

Fast16 installed itself as a Windows service, spread through network shares, ran as a kernel driver, and stayed completely hidden while it worked. The concepts behind that (exploitation, post-exploitation, persistence, privilege escalation, and moving through a network without being noticed) are exactly what my ethical hacking course covers step by step:

Hacking is not a hobby but a way of life.

Sources:

→ SentinelOne Labs: fast16: Mystery ShadowBrokers Reference Reveals High-Precision Software Sabotage 5 Years Before Stuxnet

→ Institute for Science and International Security: Iran’s Likely Violations of Section T
